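Every so often, one node in the cluster stops serving on port 1883. According to the Dashboard, the cluster has roughly 800,000 total connections and 300,000 online connections, while the affected node shows 0 connections.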

EMQX 5.8.3, open-source edition

etc/emqx.conf

node {

name = "emqx@xxx1"

cookie = "xxxxxxxxxxxxx"

max_ports = 2097152

data_dir = "/emqx/data"

}

cluster {

name = emqxcl

autoheal = true

discovery_strategy = static

static {

seeds = ["emqx@xxx1", "emqx@xxx2", "emqx@xxx3", "emqx@xxx4", "emqx@xxx5"]

}

}

dashboard {

listeners {

http.bind = 18083

# https.bind = 18084

https {

ssl_options {

certfile = "${EMQX_ETC_DIR}/certs/cert.pem"

keyfile = "${EMQX_ETC_DIR}/certs/key.pem"

}

}

}

}

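Fri Feb 27 13:43:13: the Dashboard shows 0 connections for the node, and telnet to the node's port 1883 fails, yet the EMQX process is still running and ss -l still shows the port as listening.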
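
One way to narrow down the "port is listening but telnet fails" symptom is to check whether a connection attempt is actively refused or simply times out. Below is a minimal Python sketch, not an EMQX tool; `probe_tcp` is a hypothetical helper name and the 3-second timeout is an arbitrary assumption. A `refused` result means nothing is listening on the port, while `timeout` means the TCP handshake never completed within the timeout even though the socket may still appear in `ss -l`.

```python
import socket


def probe_tcp(host: str, port: int, timeout: float = 3.0) -> str:
    """Classify one TCP connect attempt as 'open', 'refused', 'timeout', or an error string."""
    try:
        # create_connection performs the full TCP handshake or raises.
        with socket.create_connection((host, port), timeout=timeout):
            return "open"
    except ConnectionRefusedError:
        # The peer answered with RST: no listener on that port.
        return "refused"
    except socket.timeout:
        # No answer within `timeout` seconds.
        return "timeout"
    except OSError as exc:
        # Anything else (DNS failure, unreachable network, ...).
        return f"error: {exc}"


# Example call (the host name is a placeholder, as in the post):
#   probe_tcp("xxx5", 1883)
```

Running the probe from another cluster node and from the affected node itself (against 127.0.0.1) may help tell a network-path problem from a problem inside the broker.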

Logs

cat run_erl.log

run_erl [85660] Fri Feb 27 13:43:13 2026

Args before exec of shell:

run_erl [85660] Fri Feb 27 13:43:13 2026

argv[0] = sh

run_erl [85660] Fri Feb 27 13:43:13 2026

argv[1] = -c

run_erl [85660] Fri Feb 27 13:43:13 2026

argv[2] = exec "/usr/local/emqx-5.8.3/emqx-5.8.3/bin/emqx" "console"

cat emqx.log.14 | grep -E 'error|fail'

2026-02-27T12:00:02.483722+08:00 [warning] msg: long_schedule, info: [{timeout,254},{in,{emqx_connection,recvloop,2}},{out,{erlang,port_command,3}}], procinfo: [{pid,<0.78328272.2>},{memory,22008},{total_heap_size,2586},{heap_size,2586},{stack_size,9},{min_heap_size,233},{proc_lib_initial_call,{emqx_connection,init,['Argument__1','Argument__2','Argument__3','Argument__4']}},{initial_call,{proc_lib,init_p,5}},{current_stacktrace,[{emqx_connection,recvloop,2,[{file,"emqx_connection.erl"},{line,411}]},{proc_lib,wake_up,3,[{file,"proc_lib.erl"},{line,251}]}]},{registered_name,[]},{status,waiting},{message_queue_len,0},{group_leader,<0.4176.0>},{priority,normal},{trap_exit,false},{reductions,8999775},{last_calls,false},{catchlevel,1},{trace,0},{suspending,[]},{sequential_trace_token,[]},{error_handler,error_handler}]

2026-02-27T12:00:02.493724+08:00 [warning] msg: long_schedule, info: [{timeout,255},{in,{emqx_connection,recvloop,2}},{out,{erlang,port_command,3}}], procinfo: [{pid,<0.67323392.2>},{memory,47424},{total_heap_size,5783},{heap_size,1598},{stack_size,9},{min_heap_size,233},{proc_lib_initial_call,{emqx_connection,init,['Argument__1','Argument__2','Argument__3','Argument__4']}},{initial_call,{proc_lib,init_p,5}},{current_stacktrace,[{emqx_connection,recvloop,2,[{file,"emqx_connection.erl"},{line,411}]},{proc_lib,wake_up,3,[{file,"proc_lib.erl"},{line,251}]}]},{registered_name,[]},{status,waiting},{message_queue_len,0},{group_leader,<0.4176.0>},{priority,normal},{trap_exit,false},{reductions,19910},{last_calls,false},{catchlevel,1},{trace,0},{suspending,[]},{sequential_trace_token,[]},{error_handler,error_handler}]

2026-02-27T12:00:02.494906+08:00 [warning] msg: long_schedule, info: [{timeout,296},{in,{emqx_connection,recvloop,2}},{out,{erts_internal,await_result,1}}], procinfo: [{pid,<0.76308925.2>},{memory,34688},{total_heap_size,4191},{heap_size,2586},{stack_size,9},{min_heap_size,233},{proc_lib_initial_call,{emqx_connection,init,['Argument__1','Argument__2','Argument__3','Argument__4']}},{initial_call,{proc_lib,init_p,5}},{current_stacktrace,[{emqx_connection,recvloop,2,[{file,"emqx_connection.erl"},{line,411}]},{proc_lib,wake_up,3,[{file,"proc_lib.erl"},{line,251}]}]},{registered_name,[]},{status,waiting},{message_queue_len,0},{group_leader,<0.4176.0>},{priority,normal},{trap_exit,false},{reductions,61351},{last_calls,false},{catchlevel,1},{trace,0},{suspending,[]},{sequential_trace_token,[]},{error_handler,error_handler}]

2026-02-27T12:00:38.491105+08:00 [warning] msg: alarm_is_activated, message: <<"resource down: #{error => timeout,status => disconnected}">>, name: <<"action:http:action-data-mqtt-webhook:connector:http:data-mqtt-webhook">>

2026-02-27T12:00:40.084149+08:00 [error] tag: ERROR, msg: send_error, id: <<"action:http:action-data-mqtt-webhook:connector:http:data-mqtt-webhook">>, reason: {recoverable_error,<<"channel: \"action:http:action-data-mqtt-webhook:connector:http:data-mqtt-webhook\" not operational">>}, rule_id: <<"rule-data-mqtt-conn">>, rule_trigger_ts: [1772164840083]

2026-02-27T12:00:43.110512+08:00 [error] tag: ERROR, msg: send_error, id: <<"action:http:action-data-mqtt-webhook:connector:http:data-mqtt-webhook">>, reason: {recoverable_error,<<"channel: \"action:http:action-data-mqtt-webhook:connector:http:data-mqtt-webhook\" not operational">>}, rule_id: <<"rule-data-mqtt-conn">>, rule_trigger_ts: [1772164843110]

2026-02-27T12:00:53.428459+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: health_check_failed, status: disconnected, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:01:56.442542+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:02:59.468837+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:04:02.486537+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:05:05.503984+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:06:08.523913+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:07:11.545412+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:08:14.564619+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:09:17.583393+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:10:20.601825+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:11:23.620453+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:12:26.637630+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:13:29.654618+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:14:32.669699+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:15:35.684527+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:16:00.260417+08:00 [error] msg: gen_rpc_error, error: channel_error, driver: tcp, reason: econnreset, socket: #Port<0.4977228>, peer: xxx4:22156, action: stopping

2026-02-27T12:16:00.261771+08:00 [error] msg: gen_rpc_error, error: channel_error, driver: tcp, reason: econnreset, socket: #Port<0.6024>, peer: xxx3:50072, action: stopping

2026-02-27T12:16:00.270762+08:00 [error] msg: gen_rpc_error, error: channel_error, driver: tcp, reason: econnreset, socket: #Port<0.2929>, peer: xxx1:33516, action: stopping

2026-02-27T12:16:00.275601+08:00 [error] msg: gen_rpc_error, error: channel_error, driver: tcp, reason: econnreset, socket: #Port<0.20361877>, peer: xxx2:10964, action: stopping

2026-02-27T12:16:38.697371+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:17:41.711269+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:18:44.725090+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:19:47.738810+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:20:50.752054+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:21:53.767001+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:22:56.781192+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:23:59.793906+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:25:02.806316+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:26:05.822210+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:27:08.834347+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:28:11.846080+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:29:14.860669+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:30:17.875051+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:31:20.887264+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:32:23.899953+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:33:26.911632+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:34:29.924149+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:35:32.937222+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:36:35.950162+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:37:38.966214+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:38:41.980905+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:39:44.993752+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:40:48.007906+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:41:51.019170+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:42:54.032309+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:43:57.044914+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:45:00.055717+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:46:03.067954+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:47:06.080320+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:48:09.093335+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:49:12.105988+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:50:15.117804+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:51:18.129112+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:52:21.143658+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:53:24.157436+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:54:27.169462+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:55:30.182104+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:56:33.195554+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:57:36.208274+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:58:39.219898+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T12:59:42.231990+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T13:00:45.244976+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T13:01:48.256638+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T13:02:51.268146+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T13:03:54.280472+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T13:04:57.292479+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T13:06:00.304321+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T13:07:03.316875+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T13:08:06.328358+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T13:09:09.341176+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T13:10:12.352893+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T13:11:15.366648+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T13:12:18.379281+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T13:13:21.393219+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T13:14:24.407639+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T13:15:27.420557+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T13:16:30.432006+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T13:17:33.444016+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T13:18:36.457170+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T13:19:39.468926+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T13:20:42.482274+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T13:21:45.495848+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T13:22:48.507179+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T13:23:51.518504+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T13:24:54.531961+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T13:25:57.544536+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T13:27:00.557138+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T13:28:03.569987+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T13:29:06.581797+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T13:30:09.595576+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T13:31:12.607853+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T13:32:15.620178+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T13:33:18.632433+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T13:34:21.644690+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T13:35:24.656719+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T13:36:27.668584+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T13:37:30.679945+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T13:38:33.693120+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T13:39:36.705851+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T13:40:39.717861+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T13:41:42.729022+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T13:42:45.741022+08:00 [warning] tag: CONNECTOR/WEBHOOK, msg: start_resource_failed, reason: timeout, resource_id: <<"connector:http:data-mqtt-webhook">>

2026-02-27T13:57:28.093645+08:00 [error] Process: <0.238030.0> on node 'emqx@xxx5', Context: maximum heap size reached, Max Heap Size: 6291456, Total Heap Size: 7545111, Kill: true, Error Logger: true, Message Queue Len: 0, GC Info: [{old_heap_block_size,2984878},{heap_block_size,4560232},{mbuf_size,6037},{recent_size,873792},{stack_size,9},{old_heap_size,0},{heap_size,2066797},{bin_vheap_size,589758},{bin_vheap_block_size,832883},{bin_old_vheap_size,0},{bin_old_vheap_block_size,440983}]

2026-02-27T14:09:50.270273+08:00 [error] Process: <0.528436.0> on node 'emqx@xxx5', Context: maximum heap size reached, Max Heap Size: 6291456, Total Heap Size: 10865073, Kill: true, Error Logger: true, Message Queue Len: 0, GC Info: [{old_heap_block_size,4298223},{heap_block_size,6566731},{mbuf_size,7240},{recent_size,1604225},{stack_size,29},{old_heap_size,0},{heap_size,2977757},{bin_vheap_size,1089380},{bin_vheap_block_size,2180423},{bin_old_vheap_size,0},{bin_old_vheap_block_size,713510}]
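
A note on reading the two `maximum heap size reached` entries: the Erlang VM reports process heap sizes in words, not bytes. The small Python sketch below converts the logged values to MiB. It assumes a 64-bit VM with 8-byte words (the log does not state the word size, although the host is x86_64); `words_to_mib` is a hypothetical helper name.

```python
WORD_BYTES = 8  # assumption: 64-bit Erlang VM, one word = 8 bytes


def words_to_mib(words: int, word_bytes: int = WORD_BYTES) -> float:
    """Convert an Erlang heap size given in words to MiB."""
    return words * word_bytes / (1024 * 1024)


# Values copied from the two log entries above.
limit_mib = words_to_mib(6291456)    # Max Heap Size  -> 48.0
first_mib = words_to_mib(7545111)    # Total Heap Size, 13:57:28 entry
second_mib = words_to_mib(10865073)  # Total Heap Size, 14:09:50 entry

print(f"limit={limit_mib:.1f} MiB, first={first_mib:.1f} MiB, second={second_mib:.1f} MiB")
```

Under that assumption the limit is 48 MiB, and both killed processes had grown past it when the VM terminated them (`Kill: true`).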

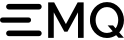

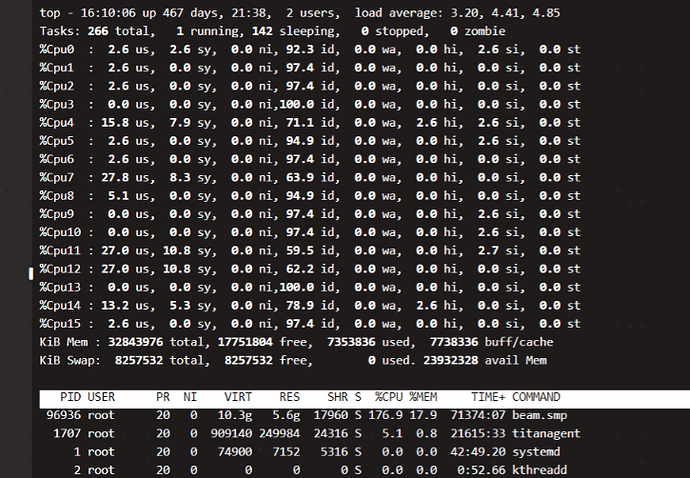

Server information:

Red Hat Enterprise Linux Server release 7.9 (Maipo)

32 GB RAM, 16 CPU cores

uname -a

5.4.17-2136.312.3.4.el7uek.x86_64 #2 SMP Wed Oct 19 17:45:00 PDT 2022 x86_64 x86_64 x86_64 GNU/Linux

/etc/sysctl.conf

fs.file-max = 1048576

net.ipv4.tcp_keepalive_time = 1200

net.ipv4.tcp_keepalive_probes = 3

net.ipv4.tcp_sack = 1

net.ipv4.tcp_window_scaling = 1

net.ipv4.tcp_rmem = 4096 87380 4194304

net.ipv4.tcp_wmem = 4096 16384 4194304

net.ipv4.tcp_max_syn_backlog = 16384

net.core.netdev_max_backlog = 32768

net.core.somaxconn = 32768

net.core.wmem_default = 8388608

net.core.rmem_default = 8388608

net.core.rmem_max = 16777216

net.core.wmem_max = 16777216

net.ipv4.tcp_timestamps = 1

net.ipv4.tcp_fin_timeout = 30

net.ipv4.tcp_synack_retries = 2

net.ipv4.tcp_syn_retries = 2

net.ipv4.tcp_syncookies = 1

net.ipv4.tcp_tw_reuse = 1

#net.ipv4.tcp_mem = 94500000 915000000 927000000

#net.ipv4.tcp_max_orphans = 3276800

net.ipv4.ip_local_port_range = 1024 65000

#net.nf_conntrack_max = 655350

#net.netfilter.nf_conntrack_max = 655350

#net.netfilter.nf_conntrack_tcp_timeout_close_wait = 60

#net.netfilter.nf_conntrack_tcp_timeout_fin_wait = 120

#net.netfilter.nf_conntrack_tcp_timeout_time_wait = 120

#net.netfilter.nf_conntrack_tcp_timeout_established = 3600

vm.swappiness=10

/etc/systemd/system.conf

# Added:

DefaultLimitCORE=infinity

DefaultLimitNOFILE=1048576

DefaultLimitNPROC=120000

/etc/security/limits.conf

* soft nproc 65535

* hard nproc 65535

* soft nofile 1048576

* hard nofile 1048576
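
Since `/etc/security/limits.conf` is applied through PAM to login sessions while `DefaultLimitNOFILE` applies to units started by systemd, which of the two takes effect depends on how EMQX was launched. A hedged way to confirm the limit the running process actually has is to read `/proc/<pid>/limits`. The Python sketch below is illustrative only; the helper names are hypothetical and the PID of the EMQX `beam.smp` process has to be supplied by the reader.

```python
from pathlib import Path
from typing import Tuple


def parse_open_files_limit(limits_text: str) -> Tuple[str, str]:
    """Return (soft, hard) from the 'Max open files' row of /proc/<pid>/limits.

    Values are returned as strings because the kernel may print 'unlimited'.
    """
    for line in limits_text.splitlines():
        if line.startswith("Max open files"):
            # Row layout: Max open files <soft> <hard> files
            fields = line.split()
            return fields[3], fields[4]
    raise ValueError("no 'Max open files' row found")


def read_open_files_limit(pid: int) -> Tuple[str, str]:
    """Read the effective open-file limits of a running process (Linux only)."""
    return parse_open_files_limit(Path(f"/proc/{pid}/limits").read_text())


# Example call (replace 12345 with the real EMQX PID):
#   soft, hard = read_open_files_limit(12345)
```

If the soft value reported for the EMQX process is lower than the 1048576 configured above, the settings in these files are not the ones in effect for that process.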